July 01, 2025 | 3 MIN READ

Pepperdata Helps Karpenter Work Better

Running Kubernetes on AWS? You're probably using Karpenter, the open-source autoscaler that dynamically provisions new instances as your...

May 15, 2025 | 7 MIN READ

Why Manual Tuning Fails: A Better Way to Optimize Kubernetes Workloads

February 28, 2025 | 7 MIN READ

The 5 Reasons to Buy (And Not Build!) Your Cost Optimization Solution

January 13, 2025 | < 1 MIN READ

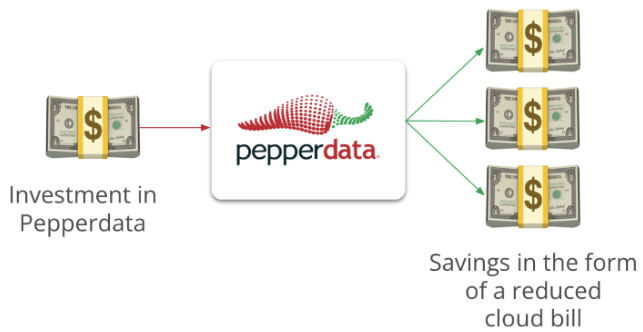

How a Global Technology Firm Realized Up to 25% Cost Savings

June 27, 2025 | 6 MIN READ